A Skills Race With a Hidden Flaw

The demand for AI skills is at an all-time high. Enrolment in AI courses has surged. Job descriptions have been rewritten. Companies are hiring engineers who can ship AI features fast. On the surface, this looks like progress. But underneath it, the AI skills gap is quietly widening.

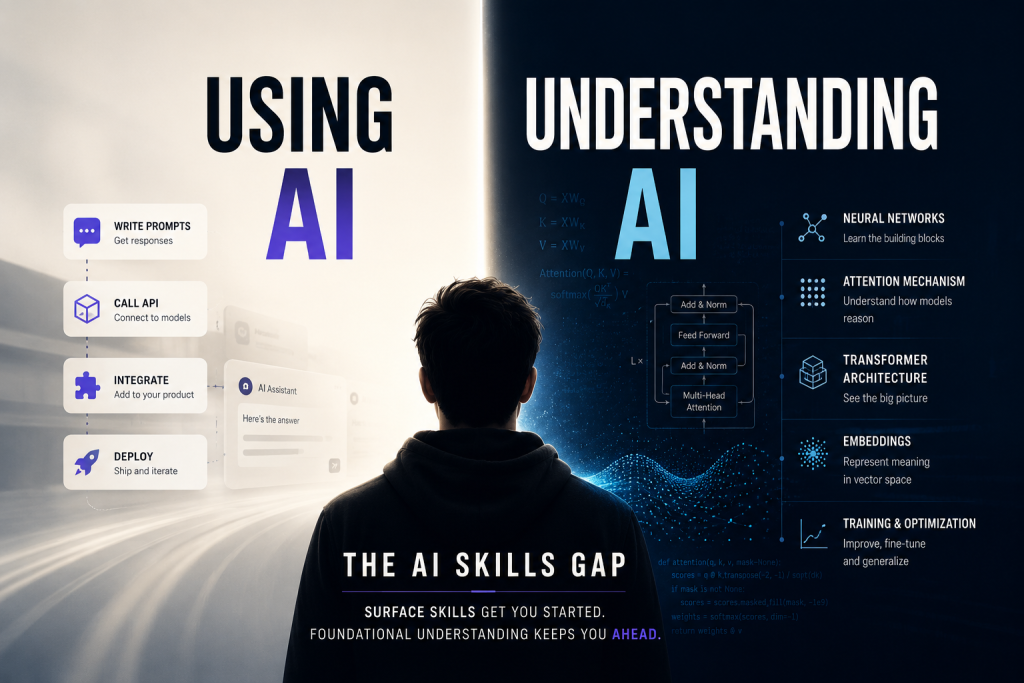

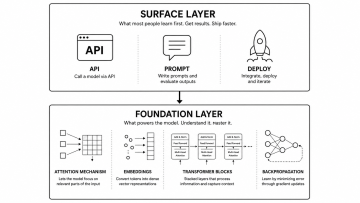

There is a difference between using AI and understanding AI, and most of the current skills race focuses on the first. This is where the AI skills gap begins to take shape: a structural problem that tends to become visible only when systems fail at scale.

This article is about the AI skills gap: what it looks like, why it matters, and what engineers can do about it.

What Most AI Education Teaches

The typical AI learning path in 2024 and 2025 looks like this:

- Connect to a model through an API

- Write prompts and evaluate outputs

- Integrate results into a product or pipeline

- Deploy, monitor, and iterate

This works. Businesses get value out of it. Products ship. Careers move forward. The ability to call a model and get something useful from it in an afternoon is genuinely powerful.

But this playbook has a ceiling. And professionals hit that ceiling the moment something goes wrong in production at scale.

What Happens When the System Breaks

When model outputs degrade unexpectedly, when latency spikes under load, when costs scale far beyond projections, when a retrieval pipeline starts returning irrelevant results at high volume, the engineer who learned only to call an API has nowhere to go.

You cannot debug what you do not understand. And if you have never looked inside the system, you are dependent entirely on documentation, vendor support, and guesswork.

The engineers who can actually diagnose these failures are the ones who understand the underlying mechanics: how attention handles long contexts, why tokenization affects reasoning on certain input types, what fine-tuning does to a model’s internal representations, how embedding distance breaks down in high-dimensional space.

These are not abstract academic topics. They are practical diagnostic tools. Engineers who have them can move fast with confidence. Engineers who do not have them are stuck waiting for the problem to solve itself.
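To make one of these mechanics concrete, here is a minimal sketch of how distance loses contrast as dimensionality grows. It uses uniformly random vectors rather than real embeddings, and the function name `relative_contrast`, the point count, and the seed are illustrative assumptions, so treat it as a demonstration of the general effect rather than a measurement of any particular embedding model.

```python
import math
import random


def relative_contrast(dim, n_points=200, seed=0):
    """Return (farthest - nearest) / nearest for one random query.

    Points and query are drawn uniformly from the unit hypercube.
    A small value means the nearest and farthest neighbours are
    almost equally far away, so ranking by distance carries
    little signal.
    """
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    distances = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        distances.append(math.dist(query, point))  # Euclidean distance
    nearest, farthest = min(distances), max(distances)
    return (farthest - nearest) / nearest


if __name__ == "__main__":
    for dim in (2, 32, 512):
        print(dim, round(relative_contrast(dim), 3))
```

With random data the contrast should shrink steadily as the dimension increases. Real embeddings have far more structure than uniform noise, so the effect is usually milder in practice, but it is one reason a retrieval system can return plausible-looking neighbours that are barely closer than everything else.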

The Questions That Separate the Two Groups

Understanding AI means being able to answer a different class of questions: not "How do I call this model?" but "Why does this model behave this way?" Here are examples of questions that separate surface-level practitioners from engineers with genuine depth:

- Why does attention allow the model to connect distant tokens to each other?

- What happens to model performance when context length exceeds the training window?

- Why does increasing temperature produce more creative but less consistent outputs?

- What is a token, precisely, and why does tokenization affect arithmetic and structured reasoning?

- Why can fine-tuning on a narrow or low-quality dataset cause catastrophic forgetting?

These questions have real answers. Engineers who know those answers can make informed decisions at each step of a system design. Engineers who do not know them are making guesses, even when the guesses look confident.
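Take the temperature question as an example. A minimal sketch, assuming the common formulation in which logits are divided by the temperature before the softmax: the logits below are made up, and `softmax_with_temperature` and `entropy` are illustrative helper names, not part of any vendor API.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities after temperature scaling."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in nats; higher means a flatter distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.5, 0.1]  # hypothetical next-token scores
    for t in (0.2, 1.0, 2.0):
        probs = softmax_with_temperature(logits, t)
        print(t, [round(p, 3) for p in probs], round(entropy(probs), 3))
```

Low temperature concentrates probability on the top-scoring token, so sampling is nearly deterministic. High temperature flattens the distribution, so less likely tokens are sampled more often, which reads as more varied but less consistent output.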

A Production Example: What Deep Understanding Changes

Consider building a conversational AI agent over a large document corpus: ten websites, over 500,000 documents, 15 gigabytes of content. The goal is precise, fast, contextually accurate responses at scale.

Getting to sub-second response times at that scale requires decisions that have nothing to do with prompt engineering. It requires understanding how embedding models represent semantic similarity, how retrieval systems degrade under high-dimensional noise, and how to structure document chunks so the context passed to the model is both dense and coherent.

None of those decisions can be made well without knowing what is happening inside the system. The prompt engineering layer is the last mile. The architecture underneath it determines whether the system is reliable or fragile.
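As one illustration of the chunking decision, here is a minimal sketch of fixed-size chunks with overlap, so that a sentence falling on a boundary still appears whole in at least one chunk. This is not the pipeline used in the system described above; the function name `chunk_words`, the word-based splitting, and the default sizes are assumptions chosen for readability.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into word-based chunks that share `overlap` words.

    Production systems often split on tokens, sentences, or document
    structure instead; plain words keep this sketch dependency-free.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

Larger chunks give the model more surrounding context but dilute the embedding of any single idea, while smaller chunks are sharper to retrieve but easier to take out of context. The overlap is a hedge against cutting an idea in half.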

This pattern repeats across production AI work. The surface layer is the visible part. The foundation layer is what determines whether the system holds up.

The Real Cost of Skipping the AI Skills Foundation

Most AI tutorials and bootcamps are optimized for speed. Get something working. Get it on your portfolio. Move on. This creates a large population of practitioners who can demonstrate AI capabilities but struggle to maintain, debug, or extend them under pressure.

When vendors update their models, these practitioners often have to start over. When infrastructure costs grow unexpectedly, they have no leverage to diagnose why. When a competitor builds something more reliable, they cannot identify which architectural choices created that reliability gap.

The engineers who will be most valuable as AI systems move into high-stakes production environments are not the ones who learned the fastest. They are the ones who learned deeply enough to make good decisions under uncertainty.

How to Close the AI Skills Gap Without Abandoning Practical Work

The two tracks are not mutually exclusive. You do not have to choose between shipping things and understanding them. The goal is to do both with intention.

Build at least one foundational system from scratch

Building a small transformer from scratch, even at GPT-2 scale, forces you to confront every architectural decision: positional encoding, multi-head attention, layer normalization, the autoregressive objective. You cannot fake understanding when you have to implement it. This is a one-time investment that pays off in every production decision you make afterward.
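To give a sense of what implementing one of these pieces involves, here is a minimal sketch of single-head scaled dot-product attention with a causal mask, written in plain Python for readability. It leaves out the learned projections, multiple heads, and batching that a real GPT-2 implementation needs, and the function names are illustrative.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of vectors, one per sequence position.
    Returns (outputs, weights); weights[i][j] is how much position i
    attends to position j, and is 0.0 for every future position j > i.
    """
    d_k = len(keys[0])
    width = len(values[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Causal mask: position i only scores positions 0..i.
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible values.
        outputs.append(
            [sum(w * values[j][c] for j, w in enumerate(weights))
             for c in range(width)]
        )
        all_weights.append(weights + [0.0] * (len(keys) - i - 1))
    return outputs, all_weights


if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy 3-token sequence
    _, weights = causal_attention(x, x, x)
    for row in weights:
        print([round(w, 3) for w in row])
```

The first row of weights is `[1.0, 0.0, 0.0]` because the first position can only see itself. That constraint is what makes the autoregressive objective work: each position is trained to predict the next token using only what came before it.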

Study failure modes, not just capabilities

Every time a system behaves unexpectedly, treat it as a research question. Why did the model hallucinate here? Why did retrieval return the wrong documents for this query? Why did fine-tuning hurt performance on this class of inputs? The answers to these questions compound into real engineering judgment over time.

Read original research, not just summaries

Papers like Attention Is All You Need, the BERT paper, and the original RAG paper are not long. They are precise. Reading them gives you vocabulary and intuition that no tutorial can replicate. You start to see the design decisions behind the tools you use every day, which changes how you use them.

Pair deep understanding with real production constraints

Understanding is not useful in isolation. When you are optimizing a retrieval pipeline, pull up the math behind cosine similarity and ask whether it is the right metric for your embedding space. When you are tuning a model, think about what the loss function is actually optimizing. The connection between theory and practice is where engineering judgment develops.
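As a small example of the cosine similarity question, the sketch below compares cosine similarity with the raw dot product on hand-picked two-dimensional vectors. The vectors are invented for illustration and the helper names are assumptions. Cosine similarity divides the dot product by both vector lengths, so it measures direction only and ignores magnitude.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Dot product divided by the product of the vector lengths."""
    norm = math.sqrt(dot(a, a)) * math.sqrt(dot(b, b))
    if norm == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot(a, b) / norm


if __name__ == "__main__":
    query = [1.0, 0.0]
    doc_a = [0.9, 0.1]  # almost the same direction, small magnitude
    doc_b = [5.0, 5.0]  # 45 degrees away, large magnitude

    for name, doc in (("doc_a", doc_a), ("doc_b", doc_b)):
        print(name,
              "cosine:", round(cosine_similarity(query, doc), 3),
              "dot:", round(dot(query, doc), 3))
```

Cosine similarity ranks `doc_a` first, while the dot product ranks `doc_b` first because of its length. Whether magnitude carries meaning depends on how the embedding model was trained, so the right metric is a property of the embedding space, not a default to accept without checking.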

What the Market Will Reward

There is a difference between engineers who can ship fast and engineers who can ship fast and know why each decision is correct. The first group is useful. The second group is irreplaceable.

As AI systems move out of demo territory and into environments where reliability and cost matter, organizations will increasingly need engineers who can design systems that hold up over time, who can diagnose failures without starting over, and who can adapt to new models and architectures without losing the reasoning behind their original design.

The engineers who can do this have something that cannot be acquired through tutorials alone. They have internalized enough of the foundations that their instincts are reliable under pressure.

A Practical Question Worth Sitting With

If the AI system your company depends on failed tomorrow and the vendor’s documentation gave you no answers, how far could you go? Could you trace the failure to a component you understand? Could you propose a fix without starting over?

If the answer is no, that is not a problem. It is information. It tells you exactly where the next level of your growth is waiting.

Everyone is learning AI right now. The engineers who will define the next decade are the ones learning how it actually works.

Author

Sujal Sokande builds production-scale intelligent systems at the intersection of deep learning, LLM deployment, and real-time AI applications. Currently studying Computer Science with a specialization in Artificial Intelligence at MIT-WPU, he has built GPT-2 from scratch, designed a conversational AI agent over a corpus of more than 500,000 documents, and ranked in the top 1% globally across 10,000+ competitors in international cybersecurity.

His work focuses on building AI systems that are not just functional at demo scale but reliable under real production constraints. He writes and speaks about the gap between using AI and understanding it because he believes that gap is the most consequential skill divide in technology right now.