Every software team has experienced the gut-drop moment right before a release. The builds passed. The staging environment looked fine. But something still feels off.

That persistent uncertainty isn’t a sign of weakness. It’s a sign that your QA stack isn’t doing everything it could.

More testing doesn’t automatically equal release confidence. Confidence comes from running the right tests, in the right order, with the right visibility.

Building a QA stack that genuinely improves confidence means rethinking not just your tools, but the philosophy behind how quality is enforced across the entire software delivery process.

Why Most QA Stacks Fall Short

The typical QA setup evolves organically. A team starts with unit tests, adds an integration suite later, and writes a few end-to-end scripts as bugs are discovered.

The result is a maze of overlapping coverage, flaky tests, and an unclear picture of what “passing” actually means.

When that happens:

- Engineers stop trusting the test suite

- Releases get delayed for manual verification “just in case”

- QA becomes an obstacle instead of an advantage

The problem isn’t the individual tools. It’s the absence of a coherent stack with deliberate steps.

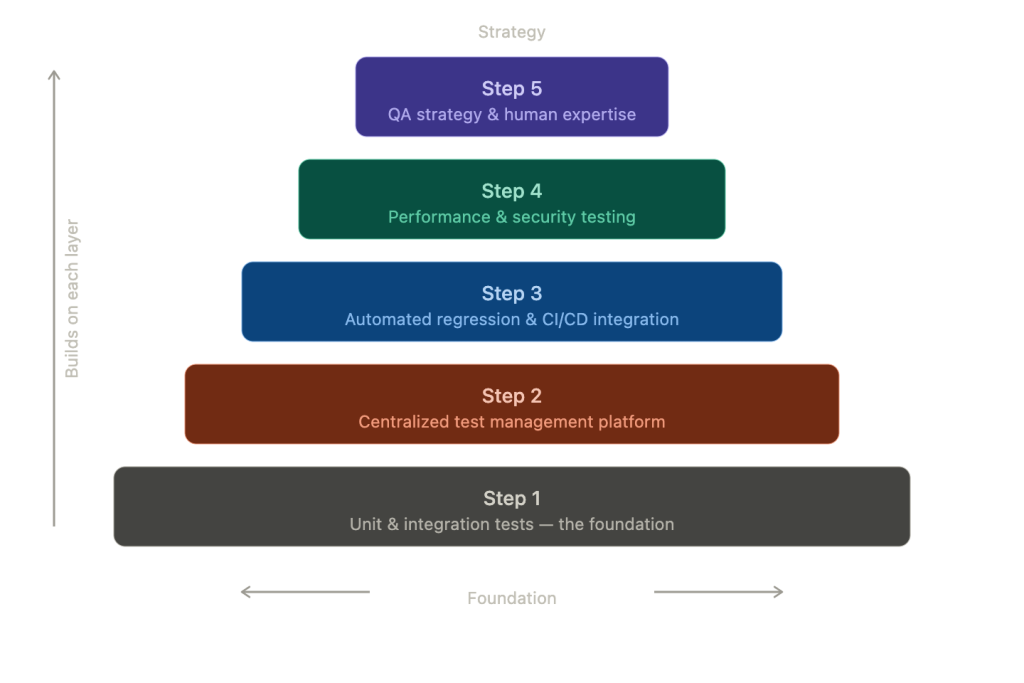

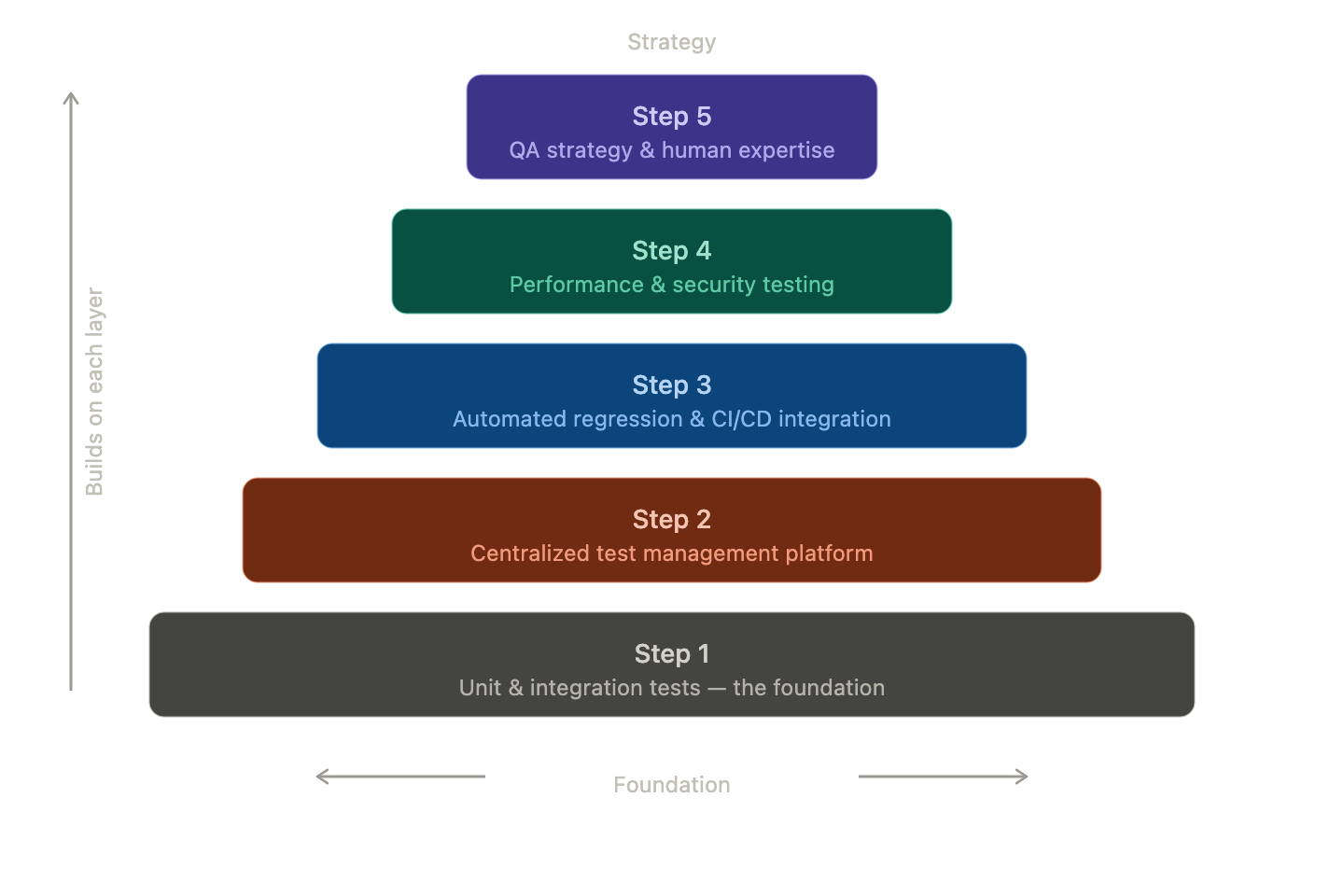

Step 1: Unit and Integration Tests as the Foundation

Your QA stack’s foundation should be developer-owned, deterministic, and fast.

The key rule here: keep it fast. If your unit suite takes longer than a few minutes, developers will stop running it locally, which breaks the feedback loop.

Save longer-running tests for the CI process and aim for sub-5-minute execution of the entire unit layer.
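One way to make the time budget concrete is to split tests by measured duration. The sketch below is a minimal illustration, assuming you already have per-test timings from your runner; the `Timing` class, the `split_by_budget` helper, and the test names are all hypothetical.

```python
from dataclasses import dataclass

# Assumption: the budget mirrors the sub-5-minute target for the unit layer.
UNIT_BUDGET_SECONDS = 5 * 60


@dataclass(frozen=True)
class Timing:
    name: str
    seconds: float


def split_by_budget(timings, budget_seconds=UNIT_BUDGET_SECONDS):
    """Keep the fastest tests in the local suite until the budget is used up.

    Returns (local, ci_only): tests that fit the budget, and the rest.
    """
    local, ci_only = [], []
    used = 0.0
    for timing in sorted(timings, key=lambda t: t.seconds):
        if used + timing.seconds <= budget_seconds:
            local.append(timing)
            used += timing.seconds
        else:
            ci_only.append(timing)
    return local, ci_only


# Hypothetical timings, in seconds.
timings = [
    Timing("test_parse", 0.2),
    Timing("test_full_export", 400.0),
    Timing("test_login", 1.5),
]
local, ci_only = split_by_budget(timings)
print([t.name for t in local])    # ['test_parse', 'test_login']
print([t.name for t in ci_only])  # ['test_full_export']
```

Anything that lands in `ci_only` is a candidate for the longer-running CI stage rather than the local loop.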

Step 2: A Centralised Test Management Platform

Here’s where many teams lose the thread. Tests live in scattered repositories, spreadsheets, or individual heads. There isn’t a single source of truth for what has been tested, what has failed, and where the coverage gaps are.

A purpose-built test management tool becomes essential in this situation. Platforms like Kualitee help solve this by bringing together:

- Test case creation

- Execution tracking

- Defect management

Release confidence becomes a measurable metric when your team can tie test cases to requirements and link each release back to concrete coverage data.
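To illustrate what tying test cases to requirements can look like as data, here is a minimal sketch. It does not use any particular platform’s API; the `requirement_coverage` function and the `REQ-`/`TC-` identifiers are hypothetical, and it assumes a requirement counts as covered when at least one linked test passed in the release run.

```python
def requirement_coverage(requirements, test_links, passed_tests):
    """Return (ratio, uncovered) for a release.

    requirements: list of requirement IDs in scope for the release.
    test_links:   dict mapping a test case ID to the requirement IDs it verifies.
    passed_tests: set of test case IDs that passed in this release run.
    """
    in_scope = set(requirements)
    covered = set()
    for test_id, req_ids in test_links.items():
        if test_id in passed_tests:
            covered.update(req_ids)
    covered &= in_scope  # ignore links to requirements outside this release
    uncovered = sorted(in_scope - covered)
    ratio = len(covered) / len(in_scope) if in_scope else 0.0
    return ratio, uncovered


# Hypothetical release data.
requirements = ["REQ-1", "REQ-2", "REQ-3", "REQ-4"]
test_links = {
    "TC-10": ["REQ-1"],
    "TC-11": ["REQ-2", "REQ-3"],
    "TC-12": ["REQ-4"],
}
passed_tests = {"TC-10", "TC-11"}

ratio, uncovered = requirement_coverage(requirements, test_links, passed_tests)
print(ratio)      # 0.75
print(uncovered)  # ['REQ-4']
```

The list of uncovered requirements is often more useful in a release review than the ratio itself, because it names the exact gap.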

Step 3: Automated Regression and CI/CD Integration

A QA stack outside your CI/CD process isn’t truly functioning. Automated regression suites need to run on every pull request and every merge, as a gate rather than an afterthought.

The goal is a clear signal:

- Green means deployable

- Red means blocked

Flaky tests compromise this entirely. Before increasing coverage, put effort into removing instability. A smaller, reliable suite consistently outperforms a large, unreliable one.
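A simple way to start finding instability is to look for tests that both passed and failed on the same commit. The following is a minimal sketch under that assumption; the `find_flaky_tests` helper and the shape of the run history are hypothetical, and real CI systems expose this data in their own formats.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Flag tests with mixed outcomes on the same commit.

    runs: iterable of (commit_sha, test_name, passed) tuples.
    A pass and a fail on identical code suggests the test, not the code, is unstable.
    """
    outcomes = defaultdict(set)
    for commit, test, passed in runs:
        outcomes[(commit, test)].add(bool(passed))
    return sorted({test for (_, test), seen in outcomes.items() if len(seen) == 2})


# Hypothetical run history.
runs = [
    ("abc123", "test_checkout", True),
    ("abc123", "test_checkout", False),  # same commit, different result
    ("abc123", "test_login", True),
    ("def456", "test_login", False),     # different commit, so not flagged
]
print(find_flaky_tests(runs))  # ['test_checkout']
```

Tests flagged this way can be quarantined and fixed before new coverage is added, which keeps the green/red signal trustworthy.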

Depending on your software application’s architecture, tools like Selenium, Playwright, or Cypress are useful at this step.

Step 4: Performance and Security Testing in the Process

Teams typically treat performance testing as a pre-launch task. That’s a mistake. Any feature change can cause a performance regression, and discovering one a week before launch creates needless frustration.

Lightweight checks to automate on every CI run:

- Response time thresholds

- Memory baselines

- Static analysis for security vulnerabilities

- Dependency scanning
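As an illustration of the first check in the list, a response time threshold can be expressed as a small function that compares a percentile of recorded latencies against a budget. This is a sketch only; the endpoints, the budgets, and the choice of the 95th percentile are assumptions, and `check_budgets` is a hypothetical helper rather than part of any tool.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def check_budgets(measurements, budgets_ms, pct=95):
    """Return a list of violations; an empty list means the check passes."""
    violations = []
    for endpoint, budget in budgets_ms.items():
        samples = measurements.get(endpoint)
        if not samples:
            # Missing data is treated as a failure so gaps do not pass silently.
            violations.append(f"{endpoint}: no samples recorded")
            continue
        observed = percentile(samples, pct)
        if observed > budget:
            violations.append(
                f"{endpoint}: p{pct} {observed:.0f} ms exceeds budget {budget} ms"
            )
    return violations


# Hypothetical latencies in milliseconds, gathered during a CI run.
measurements = {
    "/login": [120, 130, 140, 150, 900],
    "/search": [80, 90, 95, 100, 110],
}
budgets_ms = {"/login": 300, "/search": 200}

print(check_budgets(measurements, budgets_ms))
# ['/login: p95 900 ms exceeds budget 300 ms']
```

In a pipeline, a non-empty result would typically fail the job, so the regression blocks the merge instead of surfacing before launch.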

Full load testing can run on a fixed schedule or before a major milestone. The idea is to stop treating these checks as one-time events.

Step 5: QA Strategy and Human Expertise

Automation handles the repeatable. Human expertise handles the unpredictable.

Exploratory testing, edge case discovery, and usability judgment still require skilled QA professionals who understand both the product and the user.

This is where strategic QA consulting services add real value. They help teams:

- Audit existing processes

- Close coverage gaps

- Build QA strategies that scale with product complexity

When releases keep breaking despite green test runs, an outside perspective often surfaces the structural issues that internal teams overlook.

Metrics That Signal Real Confidence

A mature QA stack produces measurable outcomes. Track these:

- Defect escape rate: How many bugs are found in QA compared to those that reach production?

- Test pass rate trend: Does stability get better or worse over time?

- Mean time to detect (MTTD): How quickly does your stack surface issues after they are introduced?

- Coverage-to-requirement ratio: Are your critical paths actually put to the test?
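Two of these metrics reduce to simple arithmetic. The sketch below assumes a common definition of defect escape rate (production defects divided by all defects found) and computes MTTD as the average gap between when a defect was introduced and when it was detected; the function names and sample figures are illustrative.

```python
from datetime import datetime, timedelta


def defect_escape_rate(found_in_qa, found_in_production):
    """Share of all known defects that reached production (0.0 to 1.0)."""
    total = found_in_qa + found_in_production
    return found_in_production / total if total else 0.0


def mean_time_to_detect(defects):
    """Average gap between introduction and detection.

    defects: iterable of (introduced_at, detected_at) datetime pairs.
    """
    gaps = [detected - introduced for introduced, detected in defects]
    if not gaps:
        return timedelta(0)
    return sum(gaps, timedelta(0)) / len(gaps)


# Hypothetical figures for one release cycle.
print(defect_escape_rate(found_in_qa=45, found_in_production=5))  # 0.1

defects = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 11)),  # detected after 2 hours
    (datetime(2024, 1, 2, 9), datetime(2024, 1, 2, 13)),  # detected after 4 hours
]
print(mean_time_to_detect(defects))  # 3:00:00
```

Tracking these values per release, rather than as one-off numbers, is what shows whether the trend is moving in the right direction.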

Release confidence follows when these metrics steadily move in the right direction.

Final Thoughts

The QA stack that increases release confidence isn’t the most complicated one. It’s the most deliberate one.

Each step serves a distinct function. Tests are transparent to everyone involved in the release decision, quick when speed matters, and comprehensive when thoroughness matters.

Teams that invest in this kind of structured approach stop dreading release day. They start owning it. What does your current QA stack look like, and where is your biggest bottleneck?

Author

Jasmine Dujazz is a UK-based Human-AI writer specializing in the intersection of fashion, digital art, entertainment, and gaming, powered by Ztudium’s AI.DNA technologies. She combines real-time data intelligence with cultural insight to decode emerging trends in virtual style, immersive media, and digital culture, delivering clear, engaging, and research-driven content that reflects the evolving landscape of creative technology and global innovation for modern audiences.