Big data is fundamental to the success of any venture, whether a struggling start-up or a Fortune 500 behemoth raking in billions and looking to maintain its clout and footing. Working as a data scientist, now or in the future, requires technical and analytical skills, since you’ll be tasked with drawing insights from that data through manipulation and analysis and using those insights to make an impact for your company. In essence, you’ll be solving real problems with that data, for example, how to capture a wider market demographic or how to improve product design.

With traditional methods of handling big data computation proving ineffective, and with many programming languages not built to handle large volumes and varieties of statistical data intuitively, Python has become the go-to language for anyone practicing data science.

What is Python and What Are Its Applications?

According to experts from Google and The App Solutions, Python can be used for AI and machine learning, data analysis, developing mobile and desktop apps, testing, hacking, building web apps, and automating tasks. Thanks to its simplicity, even non-programmers can pick up Python easily. Python’s syntax is simple yet powerful, and you can solve many complex problems with compact code. Its reference implementation, CPython, is written in C, which makes it straightforward to interoperate with code written in C and other languages. You can also develop applications for multiple operating systems, such as macOS, Windows, and Linux distributions like Ubuntu. It has a huge community of users and contributors, and you can find information on Python through resources such as GitHub, Stack Overflow, and Udemy.

The single biggest advantage of using Python is the huge number of libraries and frameworks available within its ecosystem, which support applications across desktop, web, mobile, and more.
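As a small illustration of how compact Python code can be, here is a sketch that counts the most frequent words in a sentence using only the standard library. The function name `top_words` and the sample sentence are made up for this example.

```python
from collections import Counter


def top_words(text, n=3):
    """Return the n most common lowercase words in text as (word, count) pairs."""
    words = text.lower().split()
    return Counter(words).most_common(n)


# Hypothetical sample text, used only for illustration.
sample = "data beats opinion and more data beats less data"
print(top_words(sample, 2))
```

The whole task, splitting the text, counting, and ranking, fits in two lines of logic.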

Best Python Libraries for Data Science

Frameworks eliminate the need to rewrite code for tasks that are bound to recur. Django is a good example of a Python framework that eases the process of building web applications. A library is similar to a framework in that it lets you perform recurring tasks without having to rewrite code. This comes in handy for data scientists who might not have a coding background or who are still new to working with Python.

With those definitions out of the way, here are the best Python libraries for data science in 2019.

Pandas

This is a must-have tool for anyone trying to process tabular data in Python. It reads data from CSV and TSV files, SQL databases, and other sources into high-level data structures. It lets you perform complex operations with a few commands, and offers other important functionality such as sorting and grouping data, filling in missing values, and handling time series.
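The grouping, sorting, and missing-data handling mentioned above might look like the following minimal sketch. The `sales` table and its column names are invented for illustration.

```python
import pandas as pd

# Hypothetical sales records; None marks a missing value.
sales = pd.DataFrame({
    "region": ["north", "south", "north", "south"],
    "units": [10.0, None, 30.0, 20.0],
})

# Fill the missing value with 0, then total the units per region
# and sort the regions from highest to lowest total.
sales["units"] = sales["units"].fillna(0)
totals = sales.groupby("region")["units"].sum().sort_values(ascending=False)
print(totals)
```

Whether filling with 0 is appropriate depends on the data; it is used here only to keep the example short.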

NumPy

If you are working with arrays, matrices, and other multi-dimensional objects, NumPy is the best tool for you. It also boasts a vast collection of mathematical functions and operators for manipulating such data, making it extremely popular within the data science, statistics, and wider scientific communities.
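A short sketch of the kind of array and matrix work NumPy is built for, using made-up values:

```python
import numpy as np

# A 2x2 matrix and a vector with illustrative values.
matrix = np.array([[1.0, 2.0], [3.0, 4.0]])
vector = np.array([1.0, 1.0])

# Matrix-vector product using the @ operator.
product = matrix @ vector

# Mean of each column (axis=0 averages down the rows).
column_means = matrix.mean(axis=0)

print(product)
print(column_means)
```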

SciPy

This library builds upon NumPy, providing modules for optimization, integration, interpolation, linear algebra, statistics (including linear regression), clustering, and other complex engineering and scientific procedures.
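To give a feel for the integration and optimization routines, here is a minimal sketch using two simple functions chosen because their answers are known in advance.

```python
from scipy import integrate, optimize

# Numerically integrate x**2 from 0 to 3; the exact answer is 9.
area, _error_estimate = integrate.quad(lambda x: x ** 2, 0, 3)

# Find the minimum of (x - 2)**2, which lies at x = 2.
result = optimize.minimize_scalar(lambda x: (x - 2) ** 2)

print(area)
print(result.x)
```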

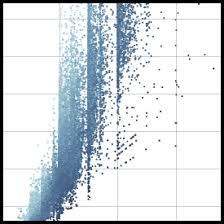

Matplotlib

No statistical or computational application would be complete without a way to visualize its data. With Matplotlib, you can plot charts, histograms, scatter plots, and more. It integrates easily with interactive environments such as the IPython shell and Jupyter Notebook.

Scikit-learn

This is best for data mining and machine learning tasks. It can be used for classification with models such as SVMs (support vector machines), as well as for regression, model tuning, and cluster analysis.
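A histogram, for instance, can be sketched as follows. The values are made up, and the example assumes the off-screen `Agg` backend so that it also runs on a machine without a display; in Jupyter you would normally skip that line and let the figure render inline.

```python
import io

import matplotlib

matplotlib.use("Agg")  # assumption: render off-screen, no display needed
import matplotlib.pyplot as plt

# Illustrative values only.
values = [1, 2, 2, 3, 3, 3, 4, 4, 5]

fig, ax = plt.subplots()
counts, bin_edges, _patches = ax.hist(values, bins=5)
ax.set_xlabel("value")
ax.set_ylabel("count")

# Write the figure as PNG into an in-memory buffer instead of a file.
buffer = io.BytesIO()
fig.savefig(buffer, format="png")
plt.close(fig)
```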
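As a rough sketch of SVM classification, the example below trains a linear classifier on two well-separated clusters. The data points and labels are invented, and a real project would also split the data into training and test sets.

```python
from sklearn.svm import SVC

# Two made-up, well-separated clusters of 2-D points.
X = [[0.0, 0.0], [0.2, 0.1], [0.1, 0.3],
     [3.0, 3.0], [3.2, 2.9], [2.9, 3.1]]
y = [0, 0, 0, 1, 1, 1]

# Fit a support vector classifier with a linear kernel.
classifier = SVC(kernel="linear")
classifier.fit(X, y)

# Classify one new point near each cluster.
predictions = classifier.predict([[0.1, 0.1], [3.1, 3.0]])
print(predictions)
```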

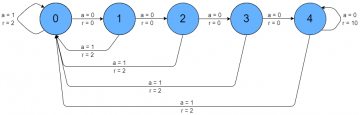

Pydot

Like Matplotlib, Pydot is used for visualization, but it targets graph structures made of nodes and edges, such as the layout of a neural network. It is a Python interface to Graphviz.

Keras

Keras is where statistics meets deep learning. It provides a high-level neural network API that greatly simplifies working with neural networks, and it runs on top of TensorFlow, Theano, and now Microsoft’s Cognitive Toolkit (CNTK).

Bokeh

Bokeh is a visualization library that renders interactive, high-impact visualizations in the browser, which makes it well suited to presenting data on websites.

Scrapy

You’ll often need to get data from webpages. Scrapy allows you to create spider bots that automatically collect and structure data from webpages, made even better by extensive API integration.

TensorFlow

Developed by Google, TensorFlow lets artificial neural networks work with large data sets, and it integrates with Keras. It is favored because its multilayered networks of nodes can be trained quickly with high computational power, and it is known for powering Google’s voice recognition system.

Plotly

If you need to integrate your data visualizations with other web-based APIs not necessarily built on Python, Plotly is the best tool to go for. It offers a wide range of chart types for different purposes.

Seaborn

One of the most popular Python visualization libraries, Seaborn is used for plotting complex statistical data. You can create plots such as time series and joint plots, and it is built on top of Matplotlib.

Gensim

Gensim is used for vector space and topic modeling and is one of the most popular Python tools for handling unstructured text. It analyzes text for statistical patterns and produces compact semantic representations, which other libraries such as NumPy can then process.

Natural Language Toolkit (NLTK)

This is also very useful for natural language processing and pattern recognition tasks. It can be used for tokenization, tagging, reasoning, building cognitive models, and other tasks useful to AI applications.

PyBrain

If you are new to the field of data science and need a way to do advanced research with real-life machine learning algorithms (say, coming from a background in Matlab), PyBrain is just for you.

There are numerous other Python frameworks and libraries that you could use as a data scientist. These 15, however, will ensure you cover all your bases as a future data scientist.

Contributed content
