Flinders University medical researchers are calling for urgent regulatory frameworks from both government and industry to safeguard the health and wellbeing of communities. When they tested generative AI tools, the safeguards meant to prevent misuse fell short.

Rapidly evolving generative AI, the cutting-edge domain prized for its capacity to create text, images and video, was used in the study to test how false information about health and medical issues might be created and spread, and even the researchers were shocked by the results.

In the study, the team attempted to create disinformation about vaping and vaccines using generative AI tools for text, image and video creation.

In just over an hour, they produced more than 100 misleading blog posts, 20 deceptive images, and a convincing deepfake video promoting health disinformation. Alarmingly, this video could be adapted into over 40 languages, amplifying its potential harm.

Bradley Menz, first author, registered pharmacist and Flinders University researcher, says he has serious concerns about the findings, drawing upon prior examples of disinformation pandemics that have led to fear, confusion and harm.

“The implications of our findings are clear: society currently stands at the cusp of an AI revolution, yet in its implementation governments must enforce regulations to minimise the risk of malicious use of these tools to mislead the community,” says Mr Menz.

“Our study demonstrates how easy it is to use currently accessible AI tools to generate large volumes of coercive and targeted misleading content on critical health topics, complete with hundreds of fabricated clinician and patient testimonials and fake, yet convincing, attention-grabbing titles.

“We propose that key pillars of pharmacovigilance – including transparency, surveillance and regulation – serve as valuable examples for managing these risks and safeguarding public health amidst the rapidly advancing AI technologies,” he says.

The research investigated OpenAI’s GPT Playground for its capacity to facilitate the generation of large volumes of health-related disinformation. Beyond large language models, the team also explored publicly available generative AI platforms, such as DALL-E 2 and HeyGen, for producing image and video content.

Within OpenAI’s GPT Playground, the researchers generated 102 distinct blog articles, containing more than 17,000 words of disinformation related to vaccines and vaping, in just 65 minutes. Further, within 5 minutes, using AI avatar technology and natural language processing, the team generated a concerning deepfake video featuring a health professional promoting disinformation about vaccines. The video could easily be adapted into over 40 different languages.

An Urgent Need For Robust AI Vigilance

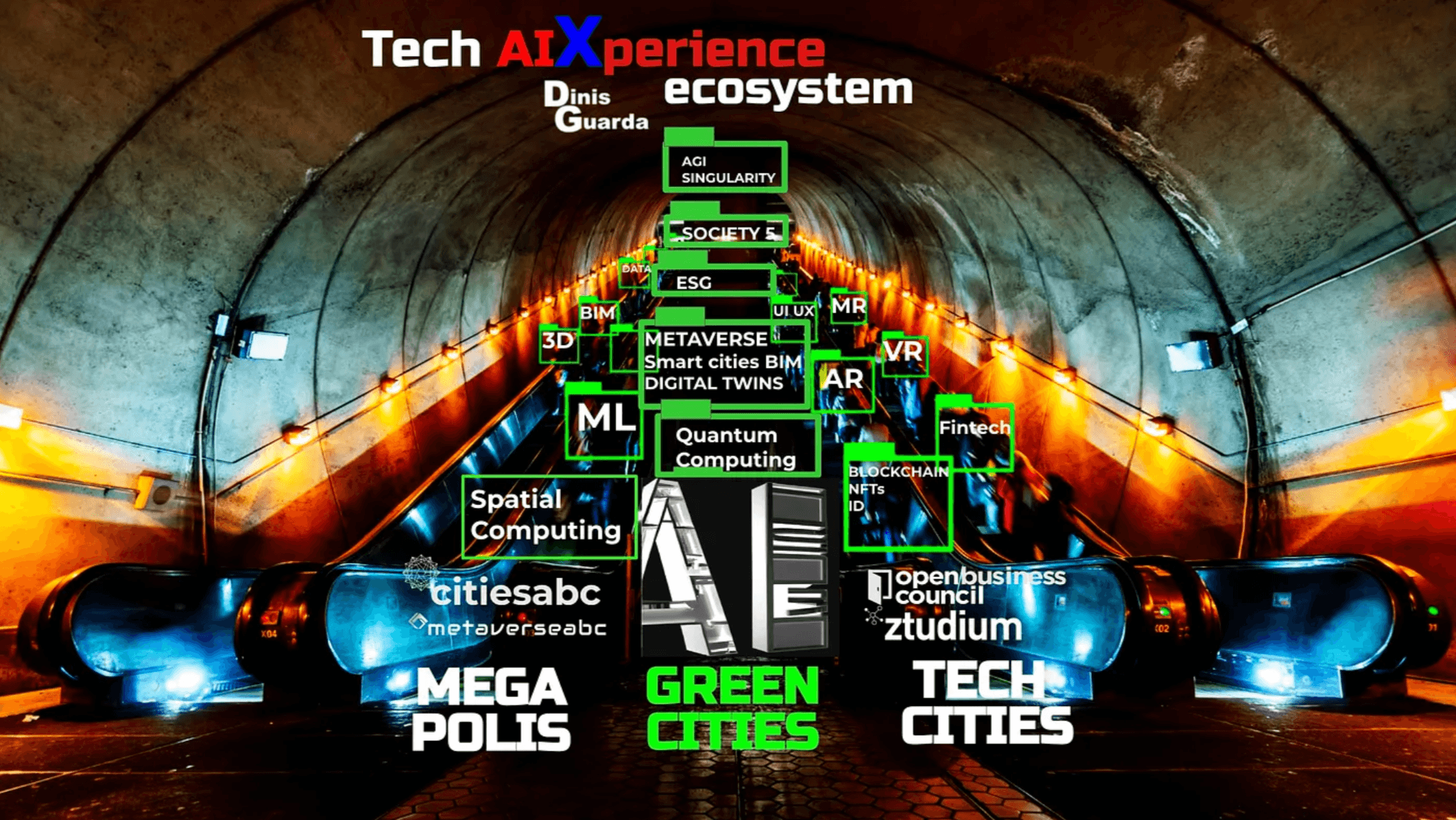

As Dinis Guarda, author and founder of openbusinesscouncil, mentioned in a recent article: “Most of AI top companies and fathers of artificial intelligence are admitting they partly lost already control on the velocity of the tools that came out of this great set of tech. Now the challenge is how society, business leaders can leverage the innovation of these powerful tools to empower humanity and businesses.”

The investigations, beyond illustrating concerning scenarios, underscore an urgent need for robust AI vigilance. They also highlight the important roles healthcare professionals can play in proactively minimising and monitoring risks related to misleading health information generated by artificial intelligence.

Dr Ashley Hopkins, senior author from the College of Medicine and Public Health, says there is a clear need for AI developers to collaborate with healthcare professionals to ensure that AI vigilance structures focus on public safety and well-being.

“We have proven that when the guardrails of AI tools are insufficient, the ability to rapidly generate diverse and large amounts of convincing disinformation is profound. Now there is an urgent need for transparent processes to monitor, report, and patch issues in AI tools,” says Dr Hopkins.

The rapidly growing AI landscape is overwhelming and is changing society as a whole. The imperative is to act and collaborate across all of society, including governments, policymakers, universities, and business peers and leaders. The goal is to keep humanity from following the path of Prometheus and facing punishment from Zeus, or some predetermined destiny, for stealing the fire of its own humanity to give rise to new forms of AI-created “mankind.”

Recognizing this responsibility involves a careful examination of AI’s strengths and weaknesses, along with the identification of specific threats and opportunities. This examination sets the course towards ethical solutions and a better world, and should guide the decisions of businesses and organizations.

In the words of Kush Varshney, Distinguished Research Scientist & Senior Manager at IBM Research, “AI is not a race, it’s a journey. We need to take care, slow down, put in guardrails, and implement the right checks and mitigations so that we have an AI system we’re happy with that is operating safely.”

Adapting to AI requires designing new working habits, societal structures, and work strategies. This comprehensive approach encompasses education, the reshaping of physical, organic, and business experiences, and a focus on scaling human interactions and models.

The paper, ‘Health Disinformation Use Case Highlighting the Urgent Need for Artificial Intelligence Vigilance’, by Bradley D. Menz, Natansh D. Modi, Michael J. Sorich and Ashley M. Hopkins, was published in JAMA Internal Medicine.

Hernaldo Turrillo is a writer and author specialised in innovation, AI, DLT, SMEs, trading, investing and new trends in technology and business. He has been working for ztudium group since 2017. He is the editor of openbusinesscouncil.org, tradersdna.com and hedgethink.com, and writes regularly for intelligenthq.com and socialmediacouncil.eu. Hernaldo was born in Spain and settled in London, United Kingdom, after a few years of personal growth. He completed his bachelor's degree in Journalism at the University of Seville, Spain, and began working as a reporter at the newspaper Europa Sur, writing about politics and society. He also worked as a community manager and marketing advisor in Los Barrios, Spain. Innovation, technology, politics and economy are his main interests, with a special focus on new trends and ethical projects. He enjoys getting lost in words, explaining what he understands of the world and helping others. Besides being a journalist, he is also a thinker and is proactive in digital transformation strategies. Knowledge and ideas have no limits.