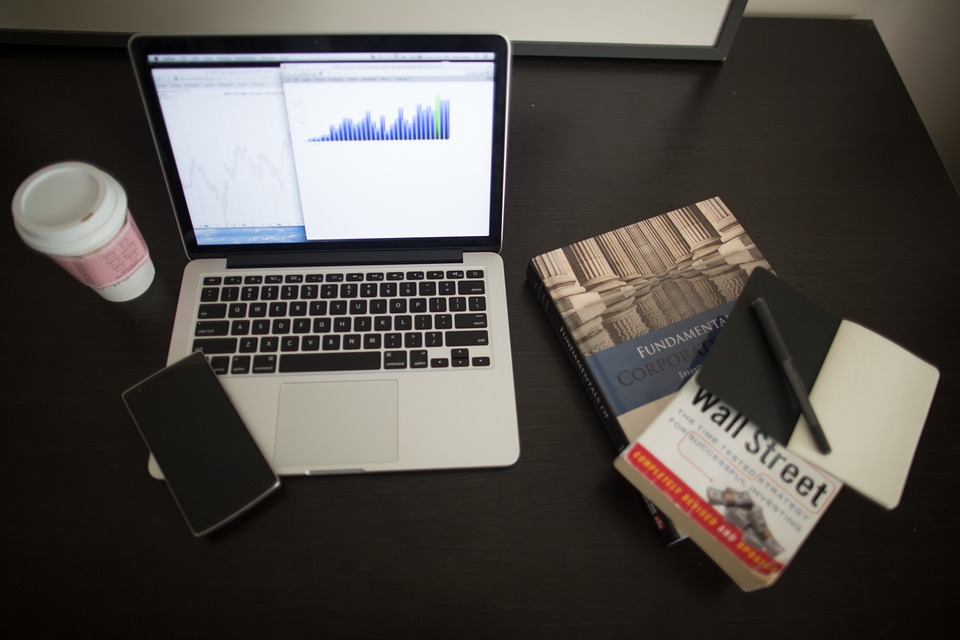

If you’ve been keeping up with business blogs and journals, you’ve probably noticed the term big data coming up a lot. The modern business arena is abuzz with this concept, which is changing the way researchers and analysts work within marketing. But what is big data? Some people will tell you it’s a gimmick that’s going to come and go in a flash. Others will say that it’s the biggest thing to happen to marketing in centuries. If you ask me, neither is true! Here, I’ve put together a simple introduction to the concept of big data, and what it means for your business. If you’re not yet ready to set up a whole data management structure on your own, you can always turn to big data service providers. These specialised companies will help you implement the tools and methodologies needed to get the most out of the data your company generates.

So, what is big data? I’ll admit with some embarrassment that when I first heard the term, I thought it referred to a certain category of large databases. Actually, there’s no set capacity a database needs to reach in order to qualify as “big”. What defines big data is that the information can’t be processed with conventional tools on a single machine, and needs newer technologies and techniques instead. In order to manage and utilise big data, a company must have access to programs which run across several physical and virtual machines. In a big data system, these all work together to process huge amounts of information within a practical timeframe.

As you can imagine, getting all these machines to work together efficiently can be a pretty complex task. In order to set up such a system, businesses need some very sought-after programming skills. It’s almost always faster for a program to read data stored on a local drive than to fetch it across a network. Because of this, the way data is spread out over a cluster, and the way the different machines are linked to one another, are very important factors. When it’s all properly streamlined, a big data system gives businesses valuable insights into how their customers are behaving and which trends are emerging in the market.

Now you’re probably wondering how big data is analysed. There are a variety of answers to that question, but as you become more involved with data analytics you’ll see some clear trends emerging. One of the most popular methods is known as MapReduce: a programming model which allows the massive sets of data in a Hadoop cluster to be split into smaller chunks, processed in parallel, and then combined into a more manageable result.

This lets analysts run functions and formulae across multiple computers all at once. As the name suggests, the MapReduce model is divided into two main parts. The “Map” step filters and categorises a given mass of data. The “Reduce” step scales the information down, brings it all together and provides the user with a summary of it. You can head to Simplilearn to find out a little more about Hadoop and its relevance to big data.
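To make the two steps a little more concrete, here’s a minimal sketch in plain Python. It isn’t Hadoop code and doesn’t use any Hadoop API: the function names (`map_phase`, `shuffle`, `reduce_phase`, `run_job`) and the sample purchase records are all made up for illustration, and everything runs on one machine. In a real cluster, the map and reduce steps would be spread across many computers.

```python
from collections import defaultdict

def map_phase(record):
    """Map: filter one record and categorise it as a (key, value) pair."""
    customer, category, amount = record
    if amount > 0:  # filter out empty orders
        yield category, amount

def shuffle(pairs):
    """Group values by key, which a framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(key, values):
    """Reduce: bring all the values for one key together into a summary."""
    return key, sum(values)

def run_job(records):
    """Run map, shuffle and reduce in sequence on a list of records."""
    pairs = [pair for record in records for pair in map_phase(record)]
    return dict(reduce_phase(k, v) for k, v in shuffle(pairs).items())

# Hypothetical sample data: (customer, product category, amount spent)
purchases = [
    ("alice", "shoes", 60),
    ("bob", "books", 15),
    ("alice", "books", 25),
    ("carol", "shoes", 0),
    ("dave", "shoes", 40),
]

totals = run_job(purchases)
print(totals)  # {'shoes': 100, 'books': 40}
```

The thing to notice is that each record is mapped independently of every other record, and each category is reduced independently of every other category. That independence is what allows the work to be shared out across a cluster.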

Next up, the tools used to analyse big data. Again, there are many different programs which fall into this category, with some companies having their programs made from scratch just to fit in with their databases. However, if your operation is new to the whole practice, you’ll probably be using the most popular and established tool – Apache Hadoop.

Hadoop, like any other program of its kind, serves to store and process data on a large scale. The main thing setting it apart from its rivals is that it’s totally open source. Furthermore, it’s been shown to run smoothly on a lot of common hardware. This means that you won’t need to go through a complete overhaul of your company’s existing setup to start utilising it. It can also run entirely in the cloud, if that’s where a big part of your business’s data lives.

The program is broken down into four main modules. First, there’s the Hadoop Distributed File System (HDFS). This is a distributed file system which stores data across the machines in a cluster and is built to provide high aggregate bandwidth. Then there’s YARN. This is the platform you use to manage the cluster’s resources, and to schedule the programs you need to run on its infrastructure. After that there’s Hadoop Common, a library of shared utilities which the other modules rely on, and MapReduce, which we’ve already covered. There’s a lot more to learn about Hadoop of course, and the best way to find out about it is bringing it into your company. For the large majority of companies, it’s the best tool for analysing big data.

Another tool which you may be interested in, also from Apache, is Spark. On the surface, Spark is more of the same with go-faster stripes. However, there are a few traits which make all the difference for some business owners. One of the biggest selling points for Spark is that it keeps most of its working data in memory, rather than on disk. This means that certain kinds of analysis run much faster. Some analysts have even said Spark makes some processes up to 100 times faster. This tool can be used easily in conjunction with the Hadoop Distributed File System. However, Apache hasn’t “done an Apple” by restricting you to this one option. Other data stores, such as Cassandra and OpenStack Swift, work well when you throw Spark into the mix. Furthermore, you won’t have much trouble running Spark on one local machine. This has made testing and improvement much easier for a lot of companies.

While these big names hold a fairly prominent position in the market, they only really make up the tip of the iceberg. There are many other tools which you could use for your big data system. Many of them have been designed for use with specific hardware and industries, so get out there and do some research!

I hope this feature has given you a much clearer idea of what big data is and what it means for your business. From here, the rest of your education is going to come through hands-on experience and trial and error. Apply it in the right way, and your big data system will give you market insights you never would have dreamed of!

IntelligentHQ Your New Business Network.
